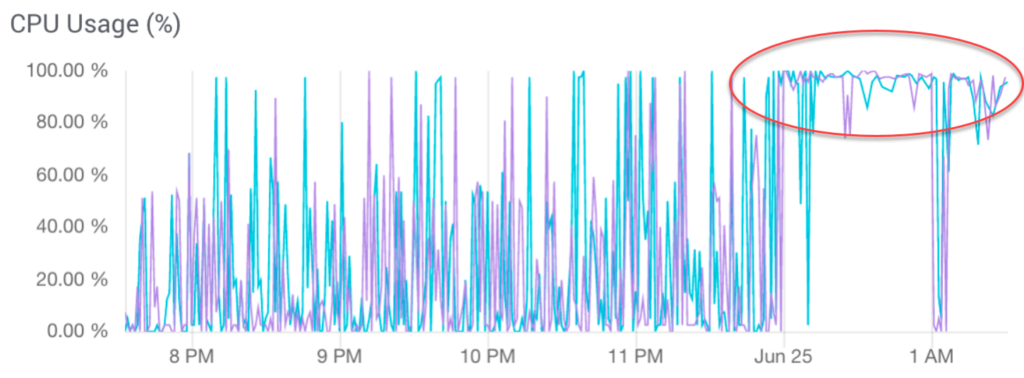

Solr administrators sometimes overload their systems by asking Solr to index too many records in a single batch. CPU levels max out at 100% for extended periods. This can cause service outages as one replica after another goes into recovery mode for no visible reason.

ZooKeeper checks the status of each replica every few minutes. When this check times out due to CPU overload, ZooKeeper assumes that the replica’s server is down. It puts the replica into “recovery mode” while it plays back all recent changes to update the replica. The replica is unavailable to the system until this process is complete.

Replica recovery places additional burdens on the CPU, which further interferes with ZooKeeper’s attempts to monitor the system. One replica after another goes into recovery, and the cascading failures bring down the whole system.
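To see which replicas are currently in recovery, you can query the Solr Collections API `CLUSTERSTATUS` action and list every replica whose state is not `active`. The following is a minimal sketch rather than an official tool: the function names and the base URL you pass in are assumptions for illustration, and it expects the usual JSON layout of the `CLUSTERSTATUS` response.

```python
import json
from urllib.request import urlopen


def fetch_cluster_status(solr_url):
    """Fetch CLUSTERSTATUS as a dict.

    solr_url is an assumed base URL such as "http://localhost:8983/solr".
    """
    url = f"{solr_url}/admin/collections?action=CLUSTERSTATUS&wt=json"
    with urlopen(url) as resp:
        return json.load(resp)


def replicas_not_active(status):
    """Return (collection, shard, replica, state) for each non-active replica."""
    found = []
    collections = status.get("cluster", {}).get("collections", {})
    for coll_name, coll in collections.items():
        for shard_name, shard in coll.get("shards", {}).items():
            for replica_name, replica in shard.get("replicas", {}).items():
                state = replica.get("state")
                # Any state other than "active" (for example "recovering"
                # or "down") means the replica is not serving requests.
                if state != "active":
                    found.append((coll_name, shard_name, replica_name, state))
    return found
```

Calling `replicas_not_active(fetch_cluster_status(base_url))` repeatedly during a heavy ingestion run shows whether the number of recovering replicas is growing, which is the cascading pattern described above.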

The immediate cure is to stop Solr and then restart it. Restarting interrupts ingestion, which relieves the CPU load, and the replicas quickly come back online.

To avoid this behavior, reduce the ingestion batch size so that ZooKeeper gets adequate access to the CPU.
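One way to keep batches small is to split the documents on the client side and pause briefly between requests. The sketch below illustrates the idea under stated assumptions: the helper names, the default batch size of 500, and the one-second pause are hypothetical starting points, not recommended values, and should be tuned while watching CPU usage on your own deployment.

```python
import json
import time
from urllib.request import Request, urlopen


def chunked(docs, batch_size):
    """Yield consecutive slices of docs, each at most batch_size long."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    for start in range(0, len(docs), batch_size):
        yield docs[start:start + batch_size]


def post_batch(update_url, batch):
    """POST one batch of documents as a JSON array to a Solr /update URL."""
    body = json.dumps(batch).encode("utf-8")
    req = Request(
        update_url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urlopen(req) as resp:
        return resp.status


def index_in_batches(docs, send, batch_size=500, pause_seconds=1.0):
    """Send docs in small batches, pausing after each one.

    send is any callable that accepts a list of documents. The pause
    leaves the CPU idle for a moment so other work can proceed.
    Returns the number of batches sent.
    """
    sent = 0
    for batch in chunked(docs, batch_size):
        send(batch)
        sent += 1
        time.sleep(pause_seconds)
    return sent
```

For example, with a hypothetical collection URL, `index_in_batches(docs, lambda b: post_batch("http://localhost:8983/solr/mycollection/update?commitWithin=10000", b))` sends the documents 500 at a time. Passing the sender as a callable keeps the batching logic independent of how the documents actually reach Solr.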

Questions?

Do not hesitate to contact the SearchStax Support Desk.